Large language models (LLMs) create certification, cost, compute capacity, and data control challenges that slow AI adoption in highly regulated industries. As governance pressure rises and certification hurdles tighten, enterprises are shifting toward small language models (SLMs) built for precision, auditability, and on-prem control. The future of regulated AI is not about scale for its own sake, but about deploying the right-sized intelligence that regulators can trust and operations can sustain.

Grace Wu, a data executive in the banking industry, explains that regulated enterprises need models purpose-built for domain focus, auditability, and infrastructure alignment. She says that SLMs are fast becoming the go-to choice in sectors like financial services. The shift is driven by real-world use cases that are accelerating adoption, along with the architectural efficiencies and governance frameworks leaders need to deploy SLMs responsibly and at scale.

“It’s not about whether SLM or LLM is better; it’s a spectrum. For highly regulated industries, small language models are more advantageous because they’re fast, domain-focused, cost-effective, and able to run on-premises to meet privacy and compliance requirements. Ultimately, it’s a leadership decision: executives must weigh infrastructure realities, risk tolerance, and long-term governance against the hype of scale,” Wu says.

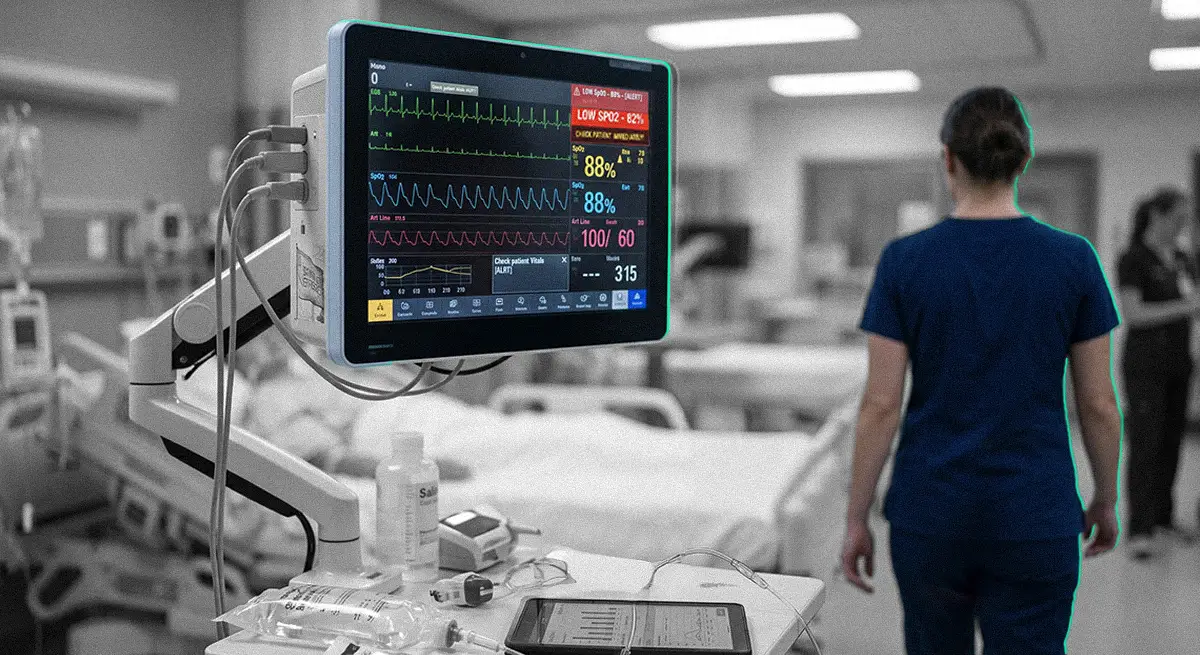

Reframing the debate: Enterprises are selecting the right model for the right job. “SLMs are dedicated to serving specific purposes. Today, especially in banking and healthcare, specialization is critical. Models are trained for credit risk analysis, financial crime prevention, client onboarding, customer due diligence, cancer diagnostics, or region-specific services in markets like Hong Kong, South Africa, or the UAE,” Wu explains.

Precision over power: Specialization makes outputs predictable and easy to validate. “Wherever we apply AI, it doesn’t mean we just use AI. It’s about which AI best fits the purpose. Leaders have to consider resources, consumption, cost, and the outcome they’re trying to achieve. We are using small agents. One agent is responsible for Hong Kong customer services, another for South Africa, another for the UAE. That is critical functionality, and they cost less while being specialized,” says Wu. Cost-efficient SLMs reduce GPU strain, letting adoption scale without ballooning infrastructure. Multi-agent architectures add control, with specialized agents tuned to geography and purpose.
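Wu’s one-agent-per-market setup can be illustrated with a minimal routing sketch. It assumes each regional agent wraps its own fine-tuned SLM; the class names, model identifiers, and stub handler are hypothetical, and the stub simply echoes the query where a real deployment would call an on-premises model. The region keys are ISO 3166 alpha-2 codes for the three markets Wu mentions. Routing fails closed for unregistered regions and records which agent answered, one way to reflect the auditability argument.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple


@dataclass(frozen=True)
class RegionalAgent:
    """A hypothetical specialist agent bound to a single market."""
    region: str                      # ISO 3166 alpha-2 code, e.g. "HK"
    model_name: str                  # hypothetical identifier for a fine-tuned SLM
    handler: Callable[[str], str]    # callable that produces the agent's answer


def make_stub_handler(region: str) -> Callable[[str], str]:
    """Stand-in for an on-premises SLM call; it only tags and echoes the query."""
    def handle(query: str) -> str:
        return f"[{region}] {query}"
    return handle


@dataclass
class RegionalRouter:
    """Sends each request to the agent registered for its region."""
    agents: Dict[str, RegionalAgent] = field(default_factory=dict)
    audit_log: List[Tuple[str, str]] = field(default_factory=list)

    def register(self, agent: RegionalAgent) -> None:
        self.agents[agent.region] = agent

    def route(self, region: str, query: str) -> str:
        agent = self.agents.get(region)
        if agent is None:
            # Fail closed: no general-purpose fallback, so every answer
            # can be traced to a known, purpose-specific agent.
            raise LookupError(f"no agent registered for region {region!r}")
        # Record which specialist handled the request before answering.
        self.audit_log.append((region, agent.model_name))
        return agent.handler(query)


if __name__ == "__main__":
    router = RegionalRouter()
    for code in ("HK", "ZA", "AE"):
        router.register(RegionalAgent(code, f"slm-cs-{code.lower()}", make_stub_handler(code)))
    print(router.route("HK", "What are your branch hours?"))
    print(router.audit_log)
```

Refusing unknown regions instead of falling back to a larger general model is a deliberate choice in this sketch: it keeps each response inside a defined scope, at the cost of needing an explicit agent for every market served.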

SLMs excel in regulated deployment: They keep sensitive data in-house, simplify compliance, reduce compute costs, enable task-specific fine-tuning, and support multi-agent architectures for regional, workflow, or specialty-focused deployments.

Most organizations operate hybrid environments where cloud and legacy systems coexist, with data and regulatory constraints requiring careful segregation. As enterprise AI matures, small language models enable specialist intelligence, assigning models to defined purposes to reduce risk, improve auditability, and ensure compliance. Leaders must balance infrastructure, compute, and integration readiness, recognizing that success depends on systems that fit real-world workflows and deliver measurable value.

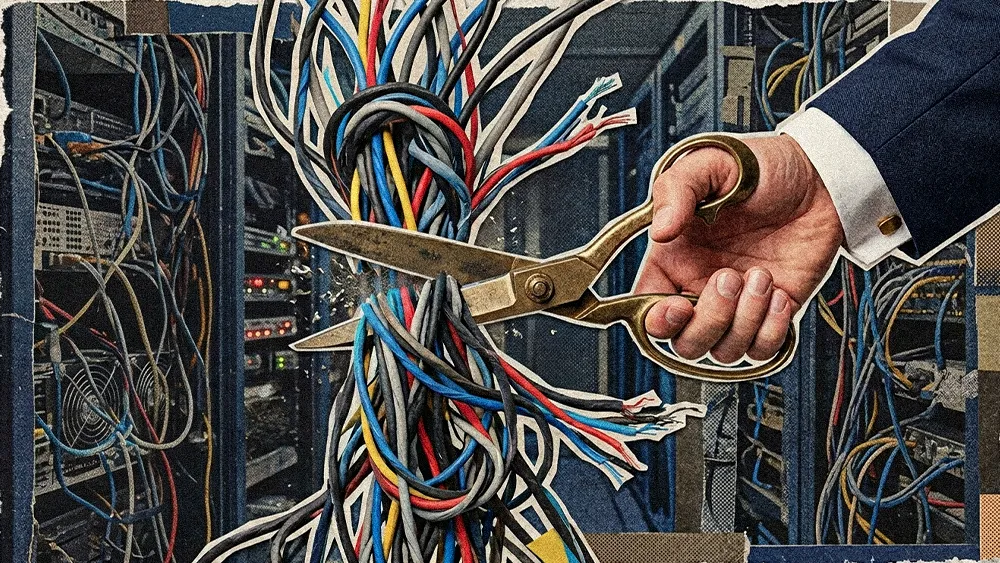

Built for the real world: “Most organizations operate legacy infrastructures alongside cloud systems. The key question is whether you can afford a brand-new workflow or need a 50/50 integration of AI and legacy. That balance allows people to adapt and the foundation to mature before full automation,” Wu adds. Financial institutions run hybrid environments where cloud and on-premises systems coexist, data can’t move freely, and internal and regulatory walls require physical or logical segregation. Because SLMs are small enough to run on-premises, inside those boundaries, they align naturally with these constraints.

The rise of the specialist: “As AI moves from general intelligence to specialist intelligence, enterprises will dedicate models to very specific purposes. That specialization, especially in finance and healthcare, is where small language models become critical,” Wu says. Enterprise AI is maturing, moving beyond general-purpose models toward disciplined deployment, where multiple specialized models reduce risk, improve auditability, and align with governance and regulatory requirements.

The leadership imperative: Leaders must assess infrastructure, compute capacity, integration tolerance, and long-term roadmaps, recognizing that while some organizations move quickly, others face multi-year adoption cycles. “The real challenge is integration with legacy systems. Success depends on building intuitive, predictable systems that fit into real-world workflows, not just chasing the newest model,” Wu explains. Integration is often the true bottleneck: AI must fit existing workflows, coexist with legacy systems, and deliver measurable value.

SLM adoption demands confidence, careful integration, and an understanding of how open-source evolution shapes practical deployment. Leaders succeed by prioritizing fit, specialization, and disciplined orchestration over the allure of the biggest or most hyped model. “SLMs are not a fad to be deployed because everyone is talking about them. They are a tool to be mastered, integrated, and aligned with purpose. The right AI in the right place delivers far more value than the most powerful AI applied blindly,” Wu concludes.