Enterprise AI adoption rarely collapses because the model fails. It collapses because the people meant to use the system never helped shape it. Across industries, engineering teams build technically sound tools in isolation, only to see adoption stall when real users encounter workflows, questions, or decisions the builders never accounted for.

Richard Harris Jr. is Vice President and Head of Data and AI for North and South America at Valtech, the experience innovation company that helps global brands drive growth through data strategy, advanced analytics, and AI. His work spans enterprise data platforms on GCP, Azure, and Databricks, LLM deployments for conversational analytics, and predictive models for marketing optimization. After years building enterprise AI systems, Harris sees the same misconception appear again and again in how organizations frame the technology.

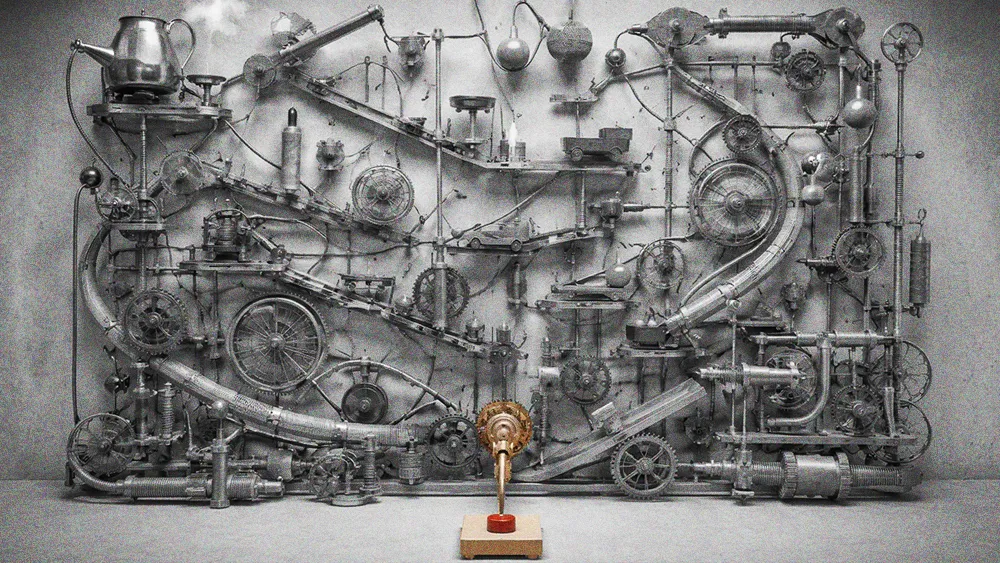

"AI should be embedded throughout the workflow as an accelerator for human decision-making, not treated like a standalone chatbot you bolt onto the business." Harris frames the current moment with an analogy. Talking about chatbots, he says, is like talking about light switches when electricity was first discovered. Organizations that fixate on chat interfaces miss where AI creates real leverage: inside engineering and operational workflows, where it accelerates development, supports earlier testing and review, and helps teams move from insight to action faster.

The execution cliff: Embedding AI into workflows exposes a harder problem, though. The leap from proof of concept to production is where most initiatives fall apart. "POCs continue to fall off the cliff because there's not a business case behind them," Harris says. "It's a cool thing that a team wanted to deploy for deployment's sake." He draws a clear line between proving a concept is possible and hardening it for production. Before an AI initiative reaches MVP, the team needs to align on the core platform, the agent framework, the security and governance model, and the long-term operating model for production. "All of those decisions have to be made prior to that MVP phase. A lot of companies skip that."

Production is where work starts: Even after deployment, Harris warns against treating AI systems as finished products. "Every single user is going to prompt the engine in a different way," he says. "So there's always going to be refinement. What kind of logging do you have for every query? How are you gathering feedback? You can't set and forget any AI deployment in production." He compares it to running a marketing campaign: creative alone does not matter if targeting, measurement, and optimization are not continuously managed.
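As a rough illustration of the logging and feedback questions Harris raises, the sketch below records every prompt and response and lets a user attach a thumbs-up or thumbs-down afterward. It is a minimal in-memory example, not a description of any system Harris or Valtech has built; the names `QueryRecord`, `QueryLog`, `record_feedback`, and `negative_rate` are hypothetical, and a real deployment would write to durable storage.

```python
import json
import time
import uuid
from dataclasses import asdict, dataclass
from typing import Dict, Optional


@dataclass
class QueryRecord:
    """One logged interaction (hypothetical schema for illustration)."""
    query_id: str
    user_id: str
    prompt: str
    response: str
    timestamp: float
    feedback: Optional[str] = None  # "up", "down", or None if never rated


class QueryLog:
    """In-memory log of every query plus optional user feedback."""

    def __init__(self) -> None:
        self._records: Dict[str, QueryRecord] = {}

    def log_query(self, user_id: str, prompt: str, response: str) -> str:
        """Store the interaction and return an id the UI can attach feedback to."""
        query_id = str(uuid.uuid4())
        self._records[query_id] = QueryRecord(
            query_id=query_id,
            user_id=user_id,
            prompt=prompt,
            response=response,
            timestamp=time.time(),
        )
        return query_id

    def record_feedback(self, query_id: str, feedback: str) -> None:
        """Attach a rating to a previously logged query."""
        if feedback not in ("up", "down"):
            raise ValueError("feedback must be 'up' or 'down'")
        self._records[query_id].feedback = feedback

    def negative_rate(self) -> float:
        """Share of rated queries marked 'down'; 0.0 when nothing is rated."""
        rated = [r for r in self._records.values() if r.feedback is not None]
        if not rated:
            return 0.0
        return sum(r.feedback == "down" for r in rated) / len(rated)

    def to_jsonl(self) -> str:
        """Serialize all records as JSON Lines for later review."""
        return "\n".join(json.dumps(asdict(r)) for r in self._records.values())
```

Reviewing the prompts behind the "down" ratings is one way a team might find the follow-up questions the system was never built around.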

The deepest failure point Harris identifies is stakeholder exclusion. When AI engineers and data scientists build systems without early input from the business users who will actually rely on them, the result is a tool that works for the builder but not for anyone else.

History repeats: Harris compares the current AI adoption challenge to the BI dashboard era. "A data analyst built a dashboard to work for them, adding filters and writing SQL to see data a certain way. But when we handed that dashboard to a business team, they weren't going to interact with all of those filters. It wasn't working," he says. "It's very similar in the AI space."

Trust disappears fast: "Adoption fails as soon as trust is lost," Harris says. "If it works really well for the 50 questions you built the system around but doesn't work for follow-up questions, trust is lost. People aren't going to use it anymore."

Left brain meets right brain: Harris argues that functional AI requires a convergence of engineering and design thinking that most teams are not structured to deliver. "We have to be strong with our data engineering, making sure the architecture is outlined and the functionality is there. But a lot of data scientists and AI engineers don't think the way a creative thinks about UI and UX," he says. "The AI space is making these two left-brain, right-brain people collide in the middle."

In Harris's experience, a large share of AI initiatives break down before they create durable value because business stakeholders are not aligned early enough. Even when the underlying system works, adoption can still fail if the interface and workflow do not make sense to nontechnical end users.

The path forward, Harris says, is not more experimentation but better execution at every stage. "How do we take use cases from the conceptual phase into a production-ready environment, and then how do we maintain what's in production long term?" he says. "These are all problems we're seeing. But they're all opportunities for us to fix."