The baseline resource requirements for modern software keep climbing. Every few years, the minimum compute needed to run production workloads resets upward, and the gap between what shared virtual environments can deliver and what AI-driven applications actually consume is getting harder to bridge. For a growing number of enterprise architects, that gap is the signal to move off hyperscaler VMs entirely.

Musanam Shah is Director of Infrastructure and Cyber Security at URU Systems, a technology consultancy focused on cloud modernization, DevSecOps, and cybersecurity governance. With a background spanning database engineering, solutions architecture, and infrastructure delivery across sectors including transit, healthcare, and financial services, Shah advises organizations on how to rethink infrastructure economics as workloads grow more demanding.

"Every few years, the minimum resources we need keep increasing. It's very hard to find virtual appliances that give you the same amount of resources that a bare metal will give you," says Shah. His argument is grounded in a simple hardware reality: shared-tenancy environments impose resource ceilings that dedicated infrastructure does not.

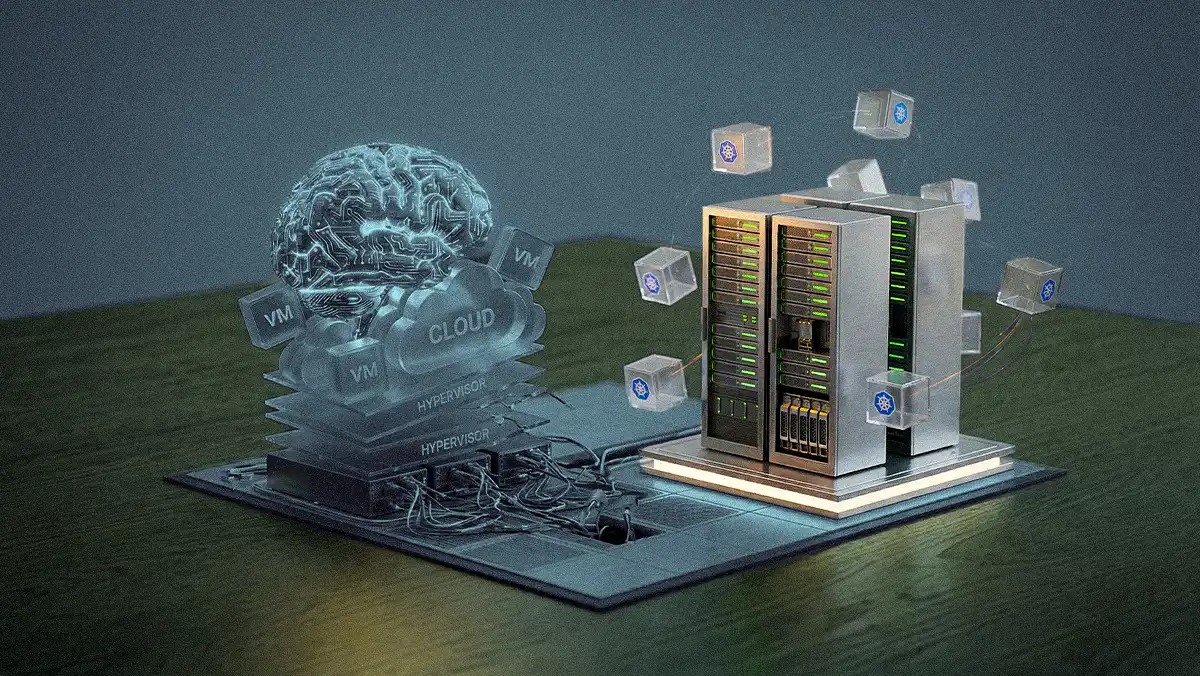

The 30% overhead problem: Hypervisor layers add roughly 30% in resource overhead to virtualized workloads, Shah explains. For predictable, always-on applications, that overhead translates directly into wasted spend. "By moving from a VM or hypervisor platform into containerization on bare metal, we were looking at reducing the bill 70%," he says, describing a client engagement where hundreds of VMs were replaced with Kubernetes-managed clusters on dedicated hardware. The workloads did not require the isolation guarantees that VMs provide, making the migration straightforward.
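To put rough numbers on those two figures, here is a minimal Python sketch. It assumes the 30% figure means the hypervisor layer consumes about 30% of the capacity being paid for, and it uses a made-up monthly bill; the function names and the dollar amount are illustrative and do not come from Shah's engagement.

```python
def overhead_spend(monthly_bill: float, overhead_fraction: float) -> float:
    """Portion of the bill that pays for overhead rather than the workload.

    Assumes overhead is a fixed fraction of provisioned (billed) capacity.
    """
    return monthly_bill * overhead_fraction


def bill_after_reduction(monthly_bill: float, reduction_fraction: float) -> float:
    """Bill that remains after cutting it by the given fraction."""
    return monthly_bill * (1.0 - reduction_fraction)


# Hypothetical always-on VM fleet costing 100,000 per month.
bill = 100_000.0

wasted = overhead_spend(bill, 0.30)          # the ~30% overhead Shah cites
remaining = bill_after_reduction(bill, 0.70)  # the ~70% reduction Shah cites

print(f"Spend attributable to overhead: {wasted:,.0f}")
print(f"Bill after a 70% reduction:     {remaining:,.0f}")
```

Under these assumptions, overhead alone accounts for less than half of the 70% reduction Shah describes. The article does not break down where the rest came from, so the sketch leaves that part unexplained.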

AI breaks the shared-tenancy model: The resource demands of AI applications are accelerating the shift. "AI is just so resource intensive. It needs full commitment from the hardware," Shah says. In shared VPS environments, providers cap sustained resource usage and throttle tenants who exceed burst limits. AI workloads, which need sustained access to GPUs and other compute resources, cannot operate under those constraints. "You get small bursts, one or two hours a day. If you keep consuming, they kick you off and tell you to move to a bare metal instance."
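The burst-then-throttle behavior can be illustrated with a toy credit model in Python. This is a sketch under assumptions, not any provider's actual policy: the `simulate_burst_credits` function, the 20% baseline, and the size of the credit bank are all hypothetical, and real providers may evict a tenant rather than simply throttle.

```python
def simulate_burst_credits(demand, baseline, max_credits):
    """Return the CPU share delivered each hour under a simple credit model.

    demand:      requested CPU share per hour, each between 0.0 and 1.0
    baseline:    sustained share the tenant may always use
    max_credits: cap on banked burst capacity, in CPU-hours
    """
    credits = max_credits  # assume the tenant starts with a full bank
    delivered = []
    for want in demand:
        if want <= baseline:
            # Running under the baseline banks the unused share, up to the cap.
            credits = min(max_credits, credits + (baseline - want))
            delivered.append(want)
        else:
            # Bursting above the baseline spends banked credits until they run out.
            extra = min(want - baseline, credits)
            credits -= extra
            delivered.append(baseline + extra)
    return delivered


# A workload that wants the whole CPU for six hours straight.
sustained_demand = [1.0] * 6
shares = simulate_burst_credits(sustained_demand, baseline=0.2, max_credits=1.6)
print([round(share, 2) for share in shares])
```

With these made-up parameters the workload gets full speed only while the credit bank lasts, then falls back to the baseline share for every remaining hour. That is the pattern Shah describes for always-on AI workloads on shared infrastructure.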

Shah points to a migration at a major social media platform to illustrate how this plays out at scale. The platform was running MongoDB on AWS EC2 instances, and the cost had become, in Shah's words, difficult to justify. Over four months, a 19-person team that included four solutions architects and multiple SREs migrated the database workload to Oracle Cloud Infrastructure bare metal servers managed through Kubernetes. The decision came down to economics: hyperscaler VMs carry a cost premium that compounds at scale, and for a database workload with predictable resource needs, dedicated hardware was the better financial fit.

AI ops lowers the barrier: What makes this shift viable for smaller organizations is that AI tooling now handles infrastructure management tasks that previously required dedicated DevOps teams. Shah describes a colleague who built an on-premises data center for his development company in Pakistan, something he never would have considered before. "He said, 'Now I have AI bots that help me manage my infrastructure and reduce my cost for having a managed operations team do this for me,'" Shah recounts. The economics of self-managed infrastructure have changed fundamentally now that a single AI subscription can replace portions of what once required full-time staff.

Where VMs stay: Shah is clear that the shift has limits. Regulated industries operate under compliance frameworks that mandate isolated environments. "Healthcare has HIPAA, HL7, ISO 27001. Your application has to be in its own dedicated virtual environment or separate server. They cannot coexist in a container on a single machine," he says. Government and public sector organizations face similar requirements. For these workloads, isolated VMs or separate servers remain the default architecture and, by Shah's account, the one that compliance requires.

The broader pattern Shah describes is not a rejection of virtualization but a correction. For more than a decade, the industry assumed that abstracting hardware away from developers was always the right call. AI workloads, with their appetite for dedicated compute at predictable cost, are forcing architects to revisit that assumption.

"Raw performance is going to overtake what held the spot for the past ten to fifteen years," Shah says. "We're going back to where we started: dedicated resource systems. And companies are already making commitments for the next three to four years of dedicated hardware, because the cost of that hardware is going up too."